Scientific visualization

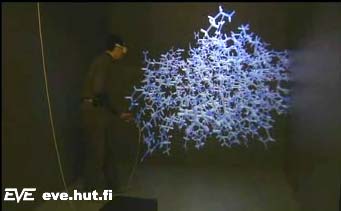

Examining a molecule (00:17, 1.08 MB)

Visualizing complicated processes, such as air or fluid flow or intermolecular binding, is a difficult task in any environment. In the EVE, we can bring these processes to life by combining the immersion created by stereo vision, a wide field of view and 3D sound effects with the processing power of the Onyx2 and a range of navigation and interaction techniques. By interacting with and navigating through the processes in real time, researchers can gain a clearer view of them. Because simulating these processes is computationally demanding, most visualization tasks, e.g. flow visualization, are currently done with pre-calculated numerical data.
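To make "pre-calculated data" concrete for flow visualization: the simulation is run in advance, and the interactive part only traces particles through the stored velocity field. The Python sketch below is an illustration under simplifying assumptions, not the EVE's own software. It assumes a steady 2D velocity field stored on a regular grid (real data sets are usually 3D and often unstructured), and the names `sample` and `trace` are hypothetical. It interpolates the velocity bilinearly and advances a tracer with the midpoint (second-order Runge-Kutta) method.

```python
from typing import List, Tuple

# Hypothetical layout: field[j][i] = (u, v), the velocity at grid point (i, j).
Vec2 = Tuple[float, float]
Field = List[List[Vec2]]


def lerp(a: float, b: float, t: float) -> float:
    """Linear interpolation between a and b."""
    return a + (b - a) * t


def sample(field: Field, x: float, y: float) -> Vec2:
    """Bilinearly interpolate the velocity at (x, y), given in grid-index units."""
    ny, nx = len(field), len(field[0])  # assumes at least a 2 x 2 grid
    # Clamp so that a particle leaving the data set samples the nearest boundary.
    x = min(max(x, 0.0), nx - 1.0)
    y = min(max(y, 0.0), ny - 1.0)
    i0, j0 = min(int(x), nx - 2), min(int(y), ny - 2)
    fx, fy = x - i0, y - j0
    result = []
    for c in (0, 1):  # c = 0: u component, c = 1: v component
        bottom = lerp(field[j0][i0][c], field[j0][i0 + 1][c], fx)
        top = lerp(field[j0 + 1][i0][c], field[j0 + 1][i0 + 1][c], fx)
        result.append(lerp(bottom, top, fy))
    return result[0], result[1]


def trace(field: Field, start: Vec2, dt: float, steps: int) -> List[Vec2]:
    """Trace a streamline through a steady field with the midpoint (RK2) method."""
    x, y = start
    path = [start]
    for _ in range(steps):
        u1, v1 = sample(field, x, y)
        # Re-evaluate the velocity half a step ahead and use it for the full step.
        u2, v2 = sample(field, x + 0.5 * dt * u1, y + 0.5 * dt * v1)
        x, y = x + dt * u2, y + dt * v2
        path.append((x, y))
    return path
```

For a uniform field of (1.0, 0.0) on a 4 x 4 grid, `trace(field, (0.0, 1.0), 0.5, 4)` moves the tracer from (0.0, 1.0) to (2.0, 1.0). Each step needs only a few lookups into the stored data, which is what makes real-time interaction feasible once the expensive simulation has been done beforehand.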

Architecture

Architectural visualization (01:18, 2.15 MB)

During the past few years, architects and the building industry have changed their working methods as they have moved from 2D to 3D design. Architects now typically have an exact 3D model of a building before construction even starts. The model can be examined interactively on a computer screen, or a walkthrough can be rendered off-line and shown to the client. Although this is an improvement over the 2D blueprints of the past, the EVE lets us do better still. No computer screen allows clients to move freely inside and around the building and experience its actual dimensions and different lighting conditions at real 1:1 scale. In the EVE, we can visualize the architecture, lighting and acoustics and, given the proper data, even the heating, plumbing and air-conditioning solutions.

Advanced interaction techniques

Virtual air guitar (00:15, 566 kB, Audio)

Since the EVE supports such a wide variety of applications, its interaction and navigation methods must be studied carefully. We currently use a six-sensor, six-degree-of-freedom (6-DOF) magnetic tracker, which supports many types of interaction. For example, we have built a wand from a cordless three-button trackball mouse and one tracker sensor. The wand is currently used for navigation, but it can easily be adapted for picking and manipulating objects. We also have a pair of fiber-optic data gloves that can be tracked as well. The Linux PC that controls the audio and the gloves runs speech-recognition software, so speech commands can also be used when working in the EVE. Studying interaction methods is an ongoing process, since every project has different interaction needs. However, we are working towards a standard platform with a selection of well-designed interaction methods that can be reused in future projects.
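As an illustration of how a tracked wand can drive navigation, the Python sketch below implements a common "point and fly" scheme: while a button is held, the viewpoint moves along the direction the wand points. It is an assumed example, not the EVE's actual navigation code. Real 6-DOF trackers report orientation as a rotation matrix, quaternion or Euler angles depending on the device; here we assume yaw and pitch in degrees, a right-handed frame with y up, and a forward direction of -z. `WandSample`, `pointing_direction` and `update_viewpoint` are hypothetical names.

```python
import math
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class WandSample:
    """One reading from the wand; the field names are hypothetical."""
    yaw_deg: float      # rotation about the vertical (y) axis, positive = turn left
    pitch_deg: float    # rotation up (positive) or down (negative)
    fly_button: bool    # True while the (hypothetical) navigation button is held


def pointing_direction(yaw_deg: float, pitch_deg: float) -> Vec3:
    """Unit vector the wand points along; y is up, yaw = pitch = 0 looks down -z."""
    yaw, pitch = math.radians(yaw_deg), math.radians(pitch_deg)
    return (-math.sin(yaw) * math.cos(pitch),
            math.sin(pitch),
            -math.cos(yaw) * math.cos(pitch))


def update_viewpoint(position: Vec3, wand: WandSample, speed: float, dt: float) -> Vec3:
    """Move the viewpoint along the wand direction while the button is held."""
    if not wand.fly_button:
        return position
    dx, dy, dz = pointing_direction(wand.yaw_deg, wand.pitch_deg)
    step = speed * dt  # distance travelled this frame (e.g. metres)
    x, y, z = position
    return (x + dx * step, y + dy * step, z + dz * step)
```

Calling `update_viewpoint` once per rendered frame with the latest tracker reading gives smooth motion whose speed is independent of the frame rate, because the step is scaled by the frame time `dt`. Picking could reuse `pointing_direction` as a ray for selecting objects.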

Simulators

Simulators are used by military organizations as well as by many others, such as the automotive, construction and aircraft industries. Given the typical size of projects in these sectors, the potential savings from using simulators can be enormous. For example, flight simulators have been built since the early 20th century, because training pilots in real aircraft is very expensive. We have studied how the EVE virtual room could be used as a simulator. The EVE offers a very wide field of view (270 degrees horizontally, seen from the center of the room), and in vehicle simulation, for example, a vehicle mock-up can be fitted into it quite easily. Although an EVE-like system does not come cheap, it can be a viable option when choosing a generic simulator platform, especially given the savings that simulators bring.

Orchestra conductor following

A virtual orchestra (01:43, 3.14 MB, Audio)

We believe that new, deeper and more meaningful interaction methods are needed in computing. The conductor follower is a test case in which natural gestures are used to convey meaning. This highly physical way of communicating is a challenging problem for information technology, because here it must be the machine that adapts to the user and not the other way around. In our system, the user takes the role of an orchestra conductor and, baton in hand, controls the virtual band. The baton's motion is analyzed with artificial neural networks and heuristic rules, and the music is then played according to this interpretation. The nuances implied by the gestures are added to the music in real time to make the system more artistically relevant.
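The analysis described above combines neural networks with heuristic rules. The Python sketch below shows only one simple heuristic of the kind such a system might use, and it is not our actual method: it treats the lowest points of the baton's vertical motion as beats and estimates the tempo from the median interval between them. It assumes the tracker delivers timestamped baton heights. `detect_beats`, `tempo_bpm` and the `min_gap` threshold are hypothetical.

```python
from statistics import median
from typing import List, Optional


def detect_beats(heights: List[float], times: List[float],
                 min_gap: float = 0.25) -> List[float]:
    """Return the times of local minima in baton height, treated as beats.

    min_gap (seconds) is a hypothetical debounce threshold: a minimum closer
    than this to the previous beat is treated as tracker jitter and ignored.
    """
    beats: List[float] = []
    for k in range(1, len(heights) - 1):
        is_minimum = heights[k] < heights[k - 1] and heights[k] <= heights[k + 1]
        if is_minimum and (not beats or times[k] - beats[-1] >= min_gap):
            beats.append(times[k])
    return beats


def tempo_bpm(beats: List[float]) -> Optional[float]:
    """Estimate tempo in beats per minute from the median inter-beat interval."""
    if len(beats) < 2:
        return None
    intervals = [later - earlier for earlier, later in zip(beats, beats[1:])]
    return 60.0 / median(intervals)
```

With a synthetic baton that dips once every 0.5 s (heights `math.cos(2 * math.pi * t / 0.5)` sampled at 100 Hz for 2 s), `detect_beats` finds beats at 0.25, 0.75, 1.25 and 1.75 s and `tempo_bpm` returns 120.0. The median is used rather than the mean so that a single missed or spurious beat does not distort the tempo much; a real follower would also have to predict the next beat so that the music is not always one beat late.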


More videos...

A lecture hall at HUT (00:40, 1.50 MB)

More lecture hall (01:26, 2.94 MB)

Helma - Drawing in the air (03:43, 3.78 MB)

The finished drawing (00:16, 551 kB)

Animaland - interactive particle animation (04:29, 7.98 MB)

More animation with Animaland (07:47, 26 MB, Audio)

A virtual xylophone (00:30, 952 kB, Audio)

A virtual, adjustable drum (00:51, 1.61 MB, Audio)

A virtual aquarium (01:09, 2.47 MB, Audio)

Walking inside a molecule (00:28, 2.58 MB)
